#BrainUp Daily Tech News – (Sunday, March 1ˢᵗ)

Welcome to today’s curated collection of interesting links and insights for 2026/03/01. Our hand-picked, AI-optimized system has processed and summarized 23 articles from all over the internet to bring you the latest technology news.

As previously aired 🔴 LIVE on Clubhouse, Chatter Social, Instagram, Twitch, X, YouTube, and TikTok.

Also available as a #Podcast on Apple 📻, Spotify 🛜, Anghami, and Amazon 🎧, or anywhere else you listen to podcasts.

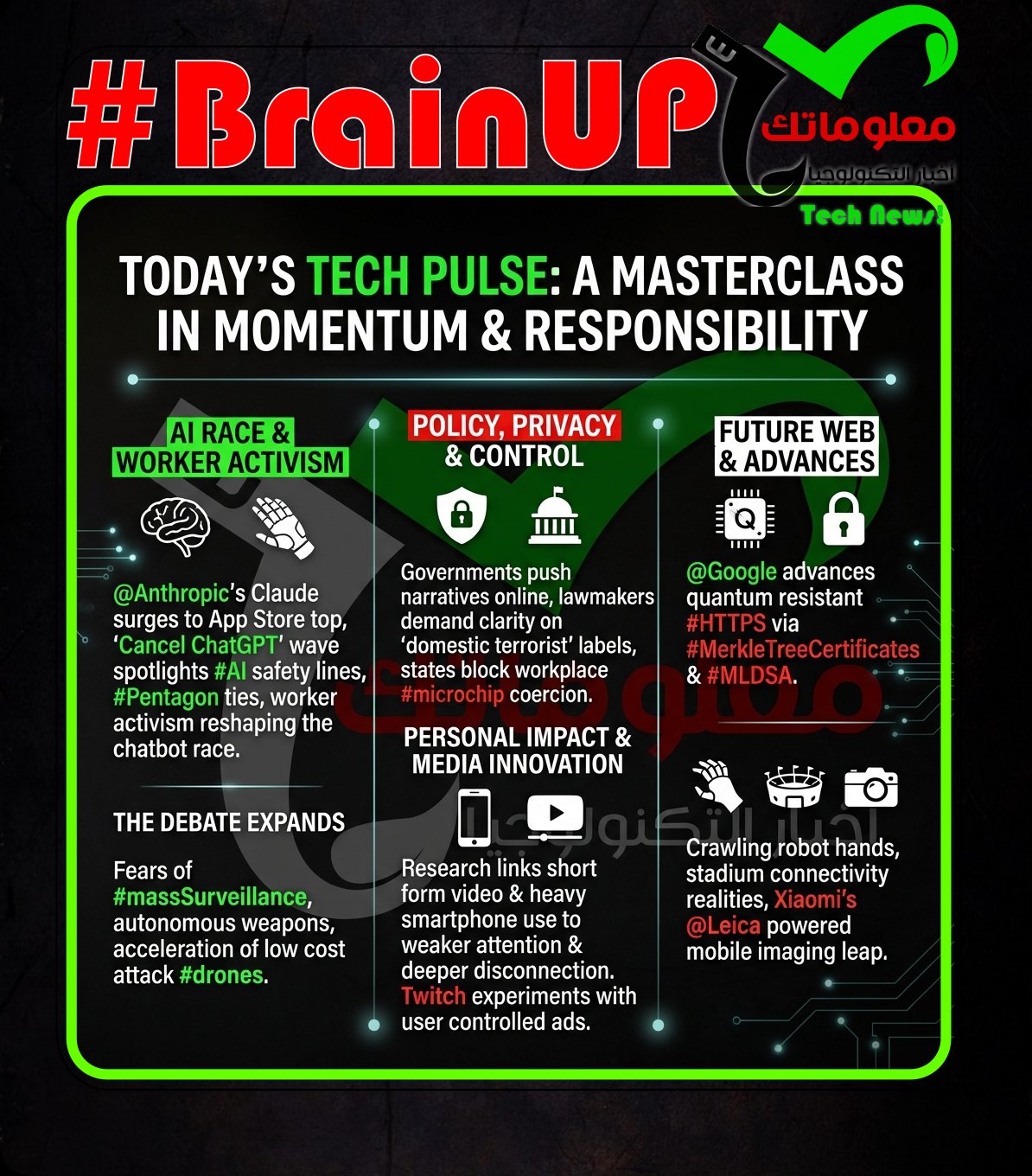

@Anthropic’s Claude rose to No. 1 among the most downloaded productivity apps on Apple’s App Store as some @OpenAI ChatGPT users said they were canceling in reaction to @OpenAI’s new #Pentagon agreement. @Sam Altman announced a deal with the Department of Defense to deploy #AI models in its classified network, and @OpenAI said the agreement emphasizes human oversight of autonomous weapons and limits domestic mass surveillance. Users posted screenshots documenting switches, including @Katy Perry sharing Claude’s $20-per-month Pro plan and others showing Anthropic receipts and OpenAI cancellation confirmations, while “Cancel ChatGPT” gained traction on Reddit and X. The reaction was not universal, with some commenters saying the news would not change their choice and pointing to #Anthropic’s prior defense-related work, including a 2024 agreement with #Palantir and #AWS to provide US intelligence and defense agencies access to Claude models. The episode underscores how differing perceptions of defense ties and safety commitments are reshaping the #chatbot wars, with @OpenAI gaining ground in Washington and @Anthropic gaining momentum with users.

2. “Cancel ChatGPT” movement goes big after OpenAI’s latest move

The article argues that a “Cancel ChatGPT” backlash is gaining momentum because @OpenAI, led by @Sam Altman, is aligning with the U.S. government after @Anthropic refused to support certain military and surveillance uses of its AI. It says @Anthropic drew two red lines, no #autonomous weapons use and no #mass surveillance of U.S. citizens, and was then labeled a supply chain risk and banned from U.S. government agencies. In contrast, it claims @Altman pledged #ChatGPT and other OpenAI technologies to the U.S. “Department of War,” stating on X the models would not be used for mass surveillance, while a U.S. official reportedly countered they would be used by “all lawful means,” which the article links to the #PatriotAct and post-9/11 authorities around bulk metadata collection. The piece frames this as a choice between insisting on usage controls versus deferring to government legal interpretations that could permit or enable surveillance in some scenarios. It ties these events to broader criticism that the #AI race lacks moral leadership and that large language models are built on extensive scraped data while threatening jobs and social stability.

3. Employees at Google and OpenAI support Anthropic’s Pentagon stand in open letter | TechCrunch

@Anthropic is at a stalemate with the U.S. Department of War over a request for unrestricted access to its AI technology, and is refusing uses it says would enable #mass_domestic_surveillance or #fully_autonomous_weaponry. As a Pentagon deadline approaches, more than 300 @Google employees and over 60 @OpenAI employees signed an open letter urging their leaders to back Anthropic and collectively uphold these red lines. The letter argues the Department is trying to divide companies by implying others might give in, and asks executives to stand together to refuse the current demands. Company leadership has not formally responded, but @Sam_Altman said he does not think the Pentagon should be threatening DPA action against AI companies, and an OpenAI spokesperson reportedly affirmed alignment with Anthropic’s red lines. @Jeff_Dean, speaking individually, also criticized mass surveillance as violating the Fourth Amendment and chilling free expression, while noting surveillance systems can be misused politically or discriminatorily.

4. YouTube Shorts and Instagram Reels are making you dumber, according to science – Dexerto

Scientists at China’s Zhejiang University report that frequent consumption of short-form videos like #TikTok, #InstagramReels, and #YouTubeShorts is associated with poorer attention and weaker executive control. In a 2024 study published in #Frontiers, 48 young adult participants completed questionnaires on addictive short-video use and self-control, plus measures of impulsivity and attention, then performed an Attention Network Test while their brain activity was recorded via #EEG. Higher reported short-form use correlated with lower self-control, worse focus scores, and weaker frontal midline activity in the prefrontal cortex region tied to focus and control. The authors interpret these results as evidence that short-video addiction tendencies may diminish self-control and attentional executive control, and they emphasize the need for interventions to mitigate short-video addiction. The article notes this is correlational rather than proof that apps “destroy” the brain, and cites a 2025 @AmericanPsychologicalAssociation study reporting similar links between social media use and reduced attention and inhibitory control.

5. Press Pause: Watch Ads. Twitch Launches New Ad Test

Twitch is testing a new advertisement format that allows viewers to choose when to watch ads by pressing a ‘Press Pause’ button. This initiative aims to improve user experience by giving viewers control over ad exposure while still supporting streamers financially. Evidence shows that streamers rely on ad revenue, but excessive ads can lead to viewer dissatisfaction and attrition. By offering a voluntary ad-watching option, Twitch seeks to balance monetization with user engagement and retention. This approach aligns with broader #ads policies promoting user choice and platform sustainability, potentially setting a precedent for other streaming services.

A study published in Addictive Behaviors suggests a vicious daily cycle between excessive smartphone use and feeling disconnected. Evidence from college students indicates that when they reach for their phones for relief, they tend to feel more detached the following day. This implies that using smartphones to cope with negative feelings may backfire by increasing social or emotional disconnection later. The findings connect excessive smartphone use with next-day detachment, indicating the behavior and the feeling can reinforce each other over time.

7. Warning: Facebook Ads for Free Windows 11 Upgrade Will Infect PCs With Malware

Security researchers warn that malicious Facebook ads promising a free upgrade to #Windows11 are circulating and infect computers with malware when users download and run the installers they promote. The campaign exploits the appeal of a free OS upgrade to trick people into executing harmful code that can compromise systems and steal data. These deceptive ads often link to unofficial download pages that host backdoor-laden installers rather than legitimate Microsoft software, and once run, the malware can install persistent threats like remote access tools, keyloggers or cryptominers. Experts emphasise that the only safe way to upgrade Windows is through official Microsoft channels such as Windows Update or verified ISO downloads from microsoft.com, and urge users to avoid third-party offers on social media that exploit trusted brands and upgrade incentives. The incident highlights how #SocialMedia advertising can be manipulated for widespread malware distribution by pairing attractive offers with low-effort credibility cues, and reinforces guidance to scrutinise download sources and keep antivirus protection up to date.

8. WA lawmakers advancing bill restricting employers from microchipping workers

Washington lawmakers are advancing #HB2303 to restrict employers from requiring or pressuring workers to get #subdermal #microchip implants, even though there are no known U.S. cases of companies mandating such implants. The bill, sponsored by @Brianna Thomas, has passed the House and moved out of the Senate Labor and Commerce Committee with bipartisan support, with limited testimony or debate. Supporters say the measure protects civil rights and workers’ rights by preventing invasive workplace tracking and surveillance and argue that real consent is impossible given workplace power dynamics, while allowing exceptions for medical microchips. Critics including @Joel McEntire warn it could be government overreach by being so restrictive that employers and employees could not even discuss or request the technology. The proposal is framed as a preemptive safeguard focused on employer conduct, not on what people choose to do outside of work.

9. DeepSeek to Release Long-Awaited AI Model in New Challenge to US Rivals

According to reporting by the Financial Times, Chinese AI company @DeepSeek is preparing to launch its much-anticipated new large language model, V4, next week, marking its first major update since the R1 model and signalling fresh competition for U.S. frontier #AI labs. Early commentary suggests V4 will offer multimodal capabilities such as image and potentially video generation alongside text, and may prioritise optimisation on domestic hardware including native Chinese chips, reflecting a strategic push toward greater efficiency and reduced reliance on Western compute ecosystems. The rollout comes amid heightened global interest in generative models that can rival those from major American labs in both performance and versatility, and observers say DeepSeek’s progress underscores how international AI innovation paths are diverging as companies outside the U.S. focus on architectural advances and local optimisation rather than sheer brute-force compute. The model’s release could reshape competitive dynamics, especially if it delivers strong performance at lower computational cost, intensifying debates over AI infrastructure investment, national technology leadership, and how global labs position themselves against each other in a rapidly evolving landscape.

10. Meet the “Enshittificator,” the Man Whose Job Is Making Your Apps Worse

The article profiles a software designer nicknamed the “Enshittificator,” a tongue-in-cheek title for someone whose job is to deliberately degrade user experiences in apps by prioritising engagement metrics over actual usability, often using #AI-driven optimisation to push addictive features, dark patterns and intrusive monetisation that keep users hooked while undermining enjoyment and clarity. It explores how product teams increasingly rely on data signals and automated experimentation to squeeze more time and attention out of users, tweaking interfaces, notifications and recommendation engines to maximise short-term engagement even if the result feels frustrating, cluttered or less intuitive, and turning many formerly straightforward apps into more complex and coercive environments. The piece argues that this dynamic reflects broader industry incentives where growth and retention metrics overshadow user satisfaction, and that #AI tools, when used without ethical guardrails, can accelerate the process by testing countless variations and learning which quality-degrading tweaks keep people scrolling. Interviews with designers and critics describe the tension between business goals and user-centered design, suggesting a cultural shift in tech where optimisation often equates to making apps “worse” on purpose, and raising questions about how to balance profitability with respect for users’ time and mental wellbeing. The Enshittificator concept captures a growing frustration that tech products are being shaped not for better experiences but for better data points at the expense of user happiness.

11. Polymarket defends its decision to allow betting on war as ‘invaluable’

Polymarket is defending its choice to let users bet on events tied to war, even as real-world violence has occurred and people have died after a reported US strike on Iran. The article notes that the platform had been offering markets on when the US would strike Iran next, and that it has faced earlier controversies, including suspicions of insider trading related to the Super Bowl halftime show and a market about the capture of Venezuelan President Nicolás Maduro. In a statement on its site, Polymarket argued that #predictionmarkets can “harness the wisdom of the crowd” to produce accurate, unbiased forecasts, calling this especially “invaluable” during “gut-wrenching times,” and it criticized traditional media and @ElonMusk’s X as less able to provide needed answers. Polymarket said it discussed the situation with people directly affected by the attacks, who had many questions, and concluded that prediction markets could answer them in ways TV news and X could not. The Verge reports it has asked Polymarket to clarify its policies on betting involving violence, suffering, war, and death.

12. We don’t have to have unsupervised killer robots

As the US #DepartmentOfDefense pressures @Anthropic to loosen #AI safety guardrails for uses including #massSurveillance and fully autonomous lethal weapons, tech workers across the industry are questioning what their companies’ defense contracts are enabling. The article describes a Pentagon ultimatum that could label Anthropic a “supply chain risk” if it refuses, while reporting that #OpenAI and #xAI had already agreed to similar terms, and that employees at firms like #AmazonWebServices, #Microsoft, and #Google feel betrayed and expect little internal pushback. @DarioAmodei says Anthropic will not “in good conscience” grant unchecked access today, citing current unreliability, while offering to collaborate with the DoD on R&D to improve system reliability, an offer he says has not been accepted. Organized groups representing 700,000 tech workers have signed a letter urging companies to reject the Pentagon’s demands, even as major AI and cloud companies have loosened policies and expanded military and intelligence work, including OpenAI removing a ban on “military and warfare” uses and some firms working with #ICE. Overall, the piece links workers’ moral alarm to an AI industry and government relationship that is rapidly normalizing fewer restrictions on military applications, raising fears of unsupervised “killer robots” and broader surveillance.

US Central Command says its new Task Force Scorpion Strike used #low-cost one-way attack drones in combat for the first time during Saturday strikes on Iran, employing the Low-Cost Unmanned Combat Attack System, or LUCAS, which is modeled after Iran’s Shahed design. CENTCOM said the drones are delivering “American-made retribution,” and noted the task force and the US military’s first one-way attack drone squadron in the Middle East were only established in December. Developed by SpektreWorks, the LUCAS is a #loitering munition that can be launched from catapults, vehicles, and mobile ground stations, has rocket-assisted takeoff, and can linger over an area before diving into a target and exploding. The strike package also included Israeli fighter jets, US warships firing Tomahawk cruise missiles, and US ground forces using #HIMARS, plus other undisclosed standoff weapons and air assets in an operation dubbed “Epic Fury.” The article frames LUCAS as part of the Pentagon push, prioritized under the @Trump administration, to field inexpensive uncrewed systems to keep pace with Russia and China, after Shahed-type drones have been heavily used in Ukraine and by Iran-backed militants in the Middle East.

14. APT37 Hackers Use New Malware to Breach Air-Gapped Networks

Researchers warn that the North Korea-linked threat group APT37 is deploying a new strain of sophisticated malware capable of pivoting into air-gapped networks — systems that are physically isolated from the internet to protect sensitive infrastructure — by exploiting removable media and firmware vulnerabilities before establishing covert communication channels that evade traditional detection. The malware leverages advanced techniques such as #Firmware manipulation and staged payloads that lie dormant until specific conditions are met, enabling it to spread from initially infected host machines into segregated environments once physical access occurs, raising alarm among cybersecurity professionals because air-gapped systems are widely used in critical sectors like defense, energy and industrial control to minimise remote attack risk. This evolution in tactics underscores how persistent threat actors continue to innovate around security perimeters previously considered strongholds, combining social engineering, supply chain compromise and low-and-slow execution to undermine isolation guarantees. Experts urge organisations to bolster physical security practices, enforce strict controls on removable media, apply firmware integrity monitoring and adopt layered detection to counter threats that can infiltrate even supposedly protected networks. The findings highlight that in today’s threat landscape, isolation alone is no longer sufficient without rigorous endpoint and hardware-level defence measures against well-resourced attackers.

15. FDA Examining Bonus Payments to Drug Reviewers After Makary Raises Concerns

The U.S. Food and Drug Administration is reviewing whether the bonus payment structure for drug reviewers could be influencing approval decisions after public health expert @Martin Makary and others raised alarms that performance-based bonuses tied to review speed and approval metrics might incentivise faster clearances rather than thorough safety evaluation. The agency is looking at whether current bonuses inadvertently reward speedy decisions ahead of deeper scrutiny, especially amid heightened public concern about drug safety and the integrity of regulatory science. FDA officials denied that bonuses dictate outcomes but acknowledged the need to assess whether compensation models align with public health priorities, transparency and accountability. The review highlights ongoing debates about how to balance efficiency with rigorous safeguards in drug oversight, and underscores broader tensions around trust in regulatory institutions tasked with protecting patients while facilitating medical innovation.

16. Google quantum-proofs HTTPS by squeezing 15kB of data into 700-byte space

@Google plans to make #HTTPS certificate verification resilient to quantum attacks without slowing the web by replacing traditional serialized certificate chains with #MerkleTreeCertificates that prove inclusion in a logged set using compact data. Today’s typical #X509 certificate chain is about 4kB and relies on elliptic curve signatures and keys that could be broken by #ShorsAlgorithm, while the quantum-resistant material needed for transparent publication can be about 40 times larger, risking slower TLS handshakes and breakage in network “middleboxes,” as @Cloudflare’s Bas Westerbaan warned. In this approach, a CA signs a single Merkle tree head covering potentially millions of certificates, and the browser receives only a lightweight proof that the certificate is included in an append-only #CertificateTransparency log, reducing the amount of post-quantum data that must be transmitted. To prevent quantum-enabled forgery of signed certificate timestamps and related log proofs, Google is adding post-quantum cryptography such as #MLDSA so an attacker would have to break both classical and post-quantum schemes, as part of a “quantum-resistant root store” that complements the 2022 Chrome Root Store. The system is already implemented in #Chrome, and Cloudflare is beginning rollout by enrolling around 1,000 TLS certificates, aiming to keep effective certificate payloads roughly near current sizes rather than ballooning.
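To make the “lightweight proof” idea concrete, here is a minimal Python sketch of a generic Merkle inclusion proof. It is not Google’s or Cloudflare’s implementation and does not follow the Merkle Tree Certificates format. As assumptions for illustration, it uses SHA-256 with the leaf/node prefix bytes and split rule of RFC 6962-style Certificate Transparency trees, and the names `tree_head`, `inclusion_proof`, and `verify` are hypothetical. The signature over the tree head is omitted entirely.

```python
import hashlib


def leaf_hash(data: bytes) -> bytes:
    # Prefix 0x00 separates leaf hashes from interior-node hashes.
    return hashlib.sha256(b"\x00" + data).digest()


def node_hash(left: bytes, right: bytes) -> bytes:
    # Prefix 0x01 marks an interior node.
    return hashlib.sha256(b"\x01" + left + right).digest()


def _split(n: int) -> int:
    # Largest power of two strictly smaller than n (n >= 2).
    k = 1
    while k * 2 < n:
        k *= 2
    return k


def tree_head(leaves: list[bytes]) -> bytes:
    # Root hash of the tree; this is the single value a CA would sign.
    if len(leaves) == 1:
        return leaf_hash(leaves[0])
    k = _split(len(leaves))
    return node_hash(tree_head(leaves[:k]), tree_head(leaves[k:]))


def inclusion_proof(leaves: list[bytes], index: int) -> list[tuple[str, bytes]]:
    # Sibling hashes from the leaf up to the root, tagged with the side
    # ("L" or "R") on which the sibling sits.
    if len(leaves) == 1:
        return []
    k = _split(len(leaves))
    if index < k:
        return inclusion_proof(leaves[:k], index) + [("R", tree_head(leaves[k:]))]
    return inclusion_proof(leaves[k:], index - k) + [("L", tree_head(leaves[:k]))]


def verify(leaf: bytes, proof: list[tuple[str, bytes]], head: bytes) -> bool:
    # Recompute the root from the leaf and its siblings, then compare.
    h = leaf_hash(leaf)
    for side, sibling in proof:
        h = node_hash(sibling, h) if side == "L" else node_hash(h, sibling)
    return h == head


# Hypothetical stand-ins for 1,000 certificates.
certs = [f"cert-{i}".encode() for i in range(1000)]
head = tree_head(certs)
proof = inclusion_proof(certs, 421)

print(verify(certs[421], proof, head))
print(len(proof), "hashes,", 32 * len(proof), "bytes of proof data")
```

In this sketch the proof grows with the logarithm of the tree size: about 20 SHA-256 hashes (640 bytes) would cover a tree of roughly a million leaves, which is why one signed tree head can stand in for many individual signatures.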

17. How the federal government is painting immigrants as criminals on social media

The article describes an aggressive federal social media campaign that portrays immigrants detained in a new immigration crackdown as dangerous criminals, which experts say is unprecedented and distorts the relationship between immigration and crime. It opens with the case of At “Ricky” Chandee, a refugee arrested by #ICE, whose image was misidentified on the White House X account and whose record was overstated as multiple felonies even though he has a single 1993 second degree assault conviction, after which he served three years and has had no further incidents while checking in with immigration authorities for over 30 years. The government has nevertheless moved to deport him to Laos, despite his lawyer saying removal had previously been deemed infeasible, and local officials described him as a longtime City of Minneapolis employee and questioned why he was targeted. NPR reports that White House, #DHS, and other agency accounts have spent much of the past year posting near daily about detained people, and that during @Donald Trump’s second term the DHS and ICE X accounts posted about more than 2,000 people tied to mass deportation efforts. While ICE data show over 70% of detainees lack criminal records, NPR’s review of 130 Minnesota cases found many had serious recent records but about a quarter resembled Chandee, involving decades old convictions, minor offenses, or only pending proceedings, supporting concerns that the online messaging selectively amplifies criminality to frame the broader crackdown.

18. Lawmakers Demand DHS Define ‘Domestic Terrorist’ As It Uses Vast Array of Surveillance Tools

More than a dozen Democratic lawmakers led by @Bennie G. Thompson demanded that the Department of Homeland Security explain how it defines a “domestic terrorist” and what policies allow it to label U.S. citizens that way, after DHS labeled Renée Good and Alex Pretti, whom DHS officers killed, as domestic terrorists. In a letter to DHS Secretary @Kristi Noem, they argue DHS is applying the label broadly “at will without evidence” while expanding #surveillance capabilities and seeking sensitive information from tech companies to identify people criticizing ICE. The letter cites reports that DHS urged staff to collect protester images and license plates, that #Palantir is building an ICE tool called #ELITE to score address confidence, that ICE bought smartphone location data, and that agents said they used #facial recognition to identify legal observers. Lawmakers describe this as an opaque, self-reinforcing cycle that erodes civil liberties and pushes the country toward an authoritarian #surveillance state that punishes dissent. They request documentation of the “domestic terrorist” definition, the legal and technical regimes governing DHS surveillance tools, and an explanation of the consequences of such designations, warning that “Accountability is coming.”

19. Open-source AI Tool Beats Giant LLMs in Literature Reviews and Gets Citations Right

Researchers have developed an open-source AI tool that outperforms large proprietary #LLMs in generating academic literature reviews by producing summaries that are not only coherent but also accurately cite sources, addressing a major shortcoming of many generative models that invent or misattribute references. This tool can be run on researchers’ own computer systems without relying on commercial cloud services, making it cheap, transparent and controllable, and allowing teams to inspect how the model works, reproduce results and avoid “hallucinated” citations that plague off-the-shelf systems. The development reflects a broader push in science to build AI tools that meet the exacting standards of academic workflows — including verifiable sourcing, traceable decisions and reproducibility — rather than purely conversational prowess. By prioritising factual grounding and citation integrity, the open-source model helps scholars accelerate literature surveys, synthesize findings and identify key trends without sacrificing reliability, and highlights how community-driven AI innovation can carve out niches that serve specialist domains better than general-purpose commercial alternatives. The approach also emphasises transparency, local deployment and researcher control as valuable properties in AI tools for science, especially in contexts where accuracy and accountability matter most.

20. The Week the Dreaded AI Jobs Wipeout Got Real

This Wall Street Journal story captures a turning point in the U.S. jobs market as @Jack Dorsey’s fintech company, Block, cut about 4,000 jobs — nearly half its workforce — explicitly citing the productivity gains from #AI tools as a key reason and igniting widespread concern that advanced automation is now driving actual layoffs rather than just long-term industry change. The announcement triggered backlash among tech workers and executives, with leaders from major firms like @Meta and @Amazon publicly debating how #AI will reshape the workforce and large-scale employment, and surveys showing growing public anxiety that automation could displace a broad swath of white-collar roles, from engineers to analysts. Critics see Block’s cuts as a stark sign that companies are willing to rely on AI to streamline teams and reduce headcount, while supporters argue that efficiency and new AI-related roles may ultimately emerge even as legacy jobs shrink. The shift in corporate discourse — from celebrating AI’s benefits to openly acknowledging its disruptive risks — suggests that the debate over automation’s economic and social impact is entering a more urgent phase where workforce transition policy and reskilling efforts will become central to how society adapts to AI-driven disruption.

21. This robot hand can detach from its arm and crawl around

Engineers in Switzerland built a detachable, spider-like #robot hand that can leave a mounted arm, crawl using its fingers, and manipulate objects with a fully symmetrical layout designed to avoid limitations of the human hand. In demonstrations described in Nature Communications at the Swiss Federal Institute of Technology Lausanne, it flips to grasp items on either side of its palm, carries objects on its back, and can hold multiple items at once, up to three objects with a combined weight of about five pounds, while reproducing 33 human grasp types. The researchers argue that symmetry, five fingers, and dual thumbs provide forward and backward flexibility, and that being detachable from an arm expands functionality compared with the human hand’s asymmetry, single opposable thumb, and fixed attachment. The design also draws inspiration from animals like octopuses and certain insects that move and manipulate simultaneously, and parallels robotic examples like Boston Dynamics’ Spot using limbs for both locomotion and handling. To create the hand, the team built a digital library of human grasp postures and used an algorithm to optimize motion and finger count, noting that adding fingers can increase mass, collisions, and clumsiness.

22. Why you can’t get a signal at festivals and sports matches

Big sports and cultural events often have poor connectivity because #mobile and #wi-fi networks face extreme, short-lived demand in venues that are physically hostile to radio signals. At Everton’s Hill Dickinson Stadium, built with HPE Aruba, the system handles about 11Gb inbound and outbound traffic, transfers 205TB on matchdays, supports 18,000 simultaneous #wi-fi connections, and uses a #DAS to boost mobile coverage, enabling services from broadcasters and emergency teams to ticketing and cashless payments. Elite competitions also drive extraordinary #bandwidth needs, with a Champions League final using 40+ cameras that can each require around 1.5Gbps, far above Ofcom’s “decent” home broadband benchmark of 10Mbps down and 1Mbps up. Older venues and surrounding areas struggle because steel, concrete, and dense crowds degrade signals, demand spikes sharply at moments like half time, and congestion can spill beyond the stadium as thousands share limited local capacity. Operators are rolling out #5G and #5GSA to increase capacity, but infrastructure upgrades can be slowed by planning objections, so improvements arrive gradually and are especially hard for temporary events like festivals.
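As a rough back-of-the-envelope check on the camera figures above, the following Python sketch multiplies them out. It assumes exactly 40 cameras all streaming at the quoted 1.5Gbps at the same time, which is a simplification of the “40+” and “around” wording in the article; the variable names are illustrative only.

```python
# Figures quoted in the article; the simultaneous-streaming assumption is ours.
CAMERAS = 40             # "40+ cameras", taken as a lower bound
CAMERA_MBPS = 1_500      # about 1.5Gbps per camera
HOME_DOWN_MBPS = 10      # Ofcom's "decent" broadband download benchmark

total_mbps = CAMERAS * CAMERA_MBPS
equivalent_home_lines = total_mbps // HOME_DOWN_MBPS

print(f"Camera feeds: {total_mbps / 1000:.0f} Gbps")
print(f"Equivalent 'decent' home downlinks: {equivalent_home_lines}")
```

Under those assumptions the camera feeds alone would need about 60Gbps, the combined download capacity of some 6,000 “decent” home connections.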

23. Xiaomi launches 17 Ultra smartphone, an AirTag clone, and an ultra slim powerbank | TechCrunch

Ahead of #MWC in Barcelona, Xiaomi announced multiple new devices led by the camera-focused Xiaomi 17 Ultra, alongside an AirTag-style tracker, the Xiaomi Watch 5 smartwatch, and an ultra slim power bank. Co-branded with @Leica, the 17 Ultra uses Leica lenses and Leica-style filters, featuring a 50MP 1-inch main sensor, a 200MP telephoto with variable 75mm to 100mm equivalent focal length for 3.2x to 4.3x optical zoom, and a 50MP ultrawide camera. It also includes a 6.9-inch HyperRGB OLED display with Shield Glass 3.0, a 6,000 mAh battery (6,800 mAh in China), 90W USB PD-PPS charging, 50W Hypercharge wireless charging, and the Snapdragon 8 Elite Gen 5 processor. Xiaomi is releasing a special @Leica 100-year edition with an aluminum-alloy body, a rotating ring that mimics camera zoom, and a “Leica Essential mode” recreating Leica M9 and M3 looks, plus optional 17 Ultra Photography Kit accessories that add physical-style controls and, in the Pro version, a USB-C snap-on with a 2,000 mAh battery and a new fastshot mode. Xiaomi is bringing the phones to the EU and UK, pricing the Xiaomi 17 from €999, the 17 Ultra from €1,499, the Leica edition at €1,999 (16GB RAM, 1TB storage), and the 17 Ultra Photography Kit at €99.99.

That’s all for today’s digest for 2026/03/01! We picked and processed 23 articles. Stay tuned for tomorrow’s collection of insights and discoveries.

Thanks, Patricia Zougheib and Dr Badawi, for curating the links

See you in the next one! 🚀